Target Detection and Identification

To detect objects, the human visual system looks for object signatures that differentiate them from the background.

The term visual saliency is used in cognitive science to describe the features that make objects detectable.

The difference between an object and its background must exceed a threshold value before the object becomes salient, in other words, before it draws attention.

In terms of camouflage, consider the following attributes, traditionally listed in military texts as the things that make a soldier conspicuous, that is, that increase his salience.

Visual Saliency

- Movement: shapes moving in a static environment, or not moving in a dynamic one, draw attention.

- Shine: reflective surfaces such as metal, glass, plastic and sweaty skin.

- Shape: humanoid shapes draw attention (e.g. faces in rock formations, man-shaped tree stumps).

- Silhouette: a contrasting blob against the background.

- Colour and texture: blue stands out in a green jungle, and so does a low-texture surface in a high-texture environment.

- Shadow: the mind can infer probable shapes from the shadows they cast, especially human shapes.

- Spacing: regularly spaced objects form a pattern that draws attention.

These correspond well to the attention-guiding attributes identified through scientific research. Of them, contrast, color, texture and shape are the attributes a camouflage garment can address.

The other attributes that make an object salient depend not on the camouflage but on how the wearer behaves.

Analysis

Using images from our previous Camouflage Comparison, we’ll show you how to analyze the effectiveness of a camouflage pattern properly.

Contrast refers to the difference in brightness between the wearer and the surrounding environment. To get a rough idea of how well a garment blends in, a low-pass filter is applied to remove high-frequency information (fine detail), leaving only the broad areas of light and dark.
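As a rough sketch of that low-pass step, the function below applies a simple box (mean) filter to a grayscale image stored as a list of rows. The article does not say which filter was used; the box filter, the `box_blur` name and the `radius` parameter are our assumptions, and in practice a Gaussian blur from an image library would usually be preferred.

```python
def box_blur(img, radius):
    """Low-pass filter: replace each pixel by the mean of its (2r+1) x (2r+1) neighbourhood.

    `img` is a list of rows of grayscale values. Neighbours that fall outside the
    image are taken from the nearest border pixel (coordinates are clamped).
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            count = 0
            for dy in range(-radius, radius + 1):
                yy = min(max(y + dy, 0), h - 1)  # clamp row index to the image
                for dx in range(-radius, radius + 1):
                    xx = min(max(x + dx, 0), w - 1)  # clamp column index
                    total += img[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out
```

Blurring a fine checkerboard turns it into an almost uniform grey: the fine detail is discarded, and only the broad light/dark contrast between wearer and background survives for comparison.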

Blending vs. Non-Blending

The image above shows that the left-hand garment is nearly undetectable, while the right-hand garment is detectable because it is lighter than the background. To understand better how each garment differs from the background, some further processing is needed.

The first step is to separate the image into its CIE L*a*b* channels: L is intensity (lightness), a is red/green contrast and b is blue/yellow contrast. Each channel map has been contrast-maximized to make the differences easier to see.
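A minimal sketch of the channel split, assuming 8-bit sRGB input and the standard D65 white point. The helper names `srgb_to_lab`, `split_lab` and `stretch` are hypothetical, and the min-max `stretch` is only our guess at what "contrast maximized" means here.

```python
def srgb_to_lab(r, g, b):
    """Convert one 8-bit sRGB pixel to CIE L*a*b* (D65 white point)."""
    def to_linear(c):
        # Undo the sRGB gamma curve to get linear light in 0..1.
        c /= 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    rl, gl, bl = to_linear(r), to_linear(g), to_linear(b)
    # Linear sRGB -> CIE XYZ (D65).
    x = 0.4124 * rl + 0.3576 * gl + 0.1805 * bl
    y = 0.2126 * rl + 0.7152 * gl + 0.0722 * bl
    z = 0.0193 * rl + 0.1192 * gl + 0.9505 * bl

    def f(t):
        # CIELAB companding: cube root, with a linear segment near black.
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = f(x / 0.95047), f(y / 1.0), f(z / 1.08883)
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)


def split_lab(rgb_image):
    """Split an image (rows of (r, g, b) tuples) into separate L, a and b channel images."""
    lab = [[srgb_to_lab(*px) for px in row] for row in rgb_image]
    return tuple([[px[i] for px in row] for row in lab] for i in range(3))


def stretch(channel):
    """Contrast-stretch a channel so its smallest value maps to 0.0 and its largest to 1.0."""
    values = [v for row in channel for v in row]
    lo, hi = min(values), max(values)
    if hi == lo:
        return [[0.0 for _ in row] for row in channel]
    return [[(v - lo) / (hi - lo) for v in row] for row in channel]
```

With this convention, stretching the a channel maps the greenest pixel to 0 (dark) and the reddest to 1 (light), and in the b channel blue ends up dark, which matches how the maps below are read.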

L Channel

In the image above, the L channel shows that the left-hand garment has the right intensity values, while the right-hand garment is lighter than the background. Black or white areas draw the most attention, and the lightest areas usually draw it first. The size of the area also has an influence.

The L difference is probably one of the most important factors in determining detectability.
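One simple way to put a number on the L difference is to compare the mean lightness inside the target with the mean of everything else. This sketch assumes a hand-drawn boolean mask marking the target's pixels; `mean_difference` is a hypothetical helper, and the same function works unchanged on the a and b channels.

```python
def mean_difference(channel, mask):
    """Mean value inside the target mask minus mean value outside it.

    `channel` and `mask` are equally sized lists of rows; mask entries are True on
    the target. For the L channel, a positive result means the target is lighter
    than its surroundings, a negative one that it is darker.
    """
    inside, outside = [], []
    for values, flags in zip(channel, mask):
        for v, on_target in zip(values, flags):
            (inside if on_target else outside).append(v)
    return sum(inside) / len(inside) - sum(outside) / len(outside)
```

How large a difference becomes noticeable depends on viewing conditions, so this gives a value to compare between garments rather than a pass/fail test.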

Red/Green Contrast Map

The image above shows the red/green contrast map, where dark means green. The left-hand garment is greener than the background, while the right-hand garment is only slightly redder.

Blue/Yellow Contrast Map

The image above shows the blue/yellow contrast map, where dark means blue. The left-hand garment is yellower than the background, while the right-hand garment is virtually spot on.
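The three maps are read separately here. If a single figure is wanted, one common option (our assumption, not the method used in this analysis) is the CIE76 color difference, the Euclidean distance between the average L*a*b* color of the target and that of the background. `mean_lab` and `delta_e76` are illustrative names.

```python
import math


def mean_lab(pixels):
    """Average a list of (L, a, b) tuples channel by channel."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))


def delta_e76(lab1, lab2):
    """CIE76 color difference: straight-line distance between two L*a*b* colors."""
    return math.sqrt(sum((c1 - c2) ** 2 for c1, c2 in zip(lab1, lab2)))
```

Typical use is `delta_e76(mean_lab(target_pixels), mean_lab(background_pixels))`, where the two pixel lists are hypothetical samples taken from the target and the background. Because it is a plain distance, a large L difference and a large a or b difference count equally, so it complements the channel maps rather than replacing them.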

Matching intensity and color only avoids detection in the observer’s peripheral vision. Once the observer looks directly at the target, the texture of the pattern printed on the garment also becomes an issue: if it is too coarse or too fine, the pattern will stand out.

A texture map shows that the left-hand garment has texture values very similar to the background’s, whereas the right-hand garment does not fit.

Texture Map

The image above shows the texture map: white indicates high texture, black indicates low texture.
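The article does not say how its texture map was produced. A common, simple choice, assumed here, is the local standard deviation of intensity: flat areas score near zero and busy areas score high, matching the black/white reading of the map. `texture_map` and `radius` are illustrative names.

```python
import math


def texture_map(img, radius):
    """Local standard deviation of a grayscale image in a (2r+1) x (2r+1) window.

    High values mark busy (high-texture) areas, values near zero mark flat ones.
    Windows are clipped at the image border rather than padded.
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [img[yy][xx]
                    for yy in range(max(0, y - radius), min(h, y + radius + 1))
                    for xx in range(max(0, x - radius), min(w, x + radius + 1))]
            mean = sum(vals) / len(vals)
            out[y][x] = math.sqrt(sum((v - mean) ** 2 for v in vals) / len(vals))
    return out
```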

The texture also needs to match the background at multiple scales. If the intensity, color and texture of an object all match the environment, it will be extremely hard to separate from the background.
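To check texture at several scales, one option (an assumption, not the article's method) is to split a patch into non-overlapping blocks of increasing size and average the standard deviation inside each block. Two patches with matching texture should give similar values at every block size; the hypothetical `block_std_profile` below computes such a profile.

```python
import math


def block_std_profile(img, scales):
    """Mean within-block standard deviation of a grayscale patch, for each block size in `scales`."""
    h, w = len(img), len(img[0])
    profile = {}
    for s in scales:
        stds = []
        # Walk over non-overlapping s x s blocks; partial blocks at the edges are skipped.
        for y0 in range(0, h - s + 1, s):
            for x0 in range(0, w - s + 1, s):
                vals = [img[y][x] for y in range(y0, y0 + s) for x in range(x0, x0 + s)]
                mean = sum(vals) / len(vals)
                stds.append(math.sqrt(sum((v - mean) ** 2 for v in vals) / len(vals)))
        profile[s] = sum(stds) / len(stds)
    return profile
```

For example, a one-pixel checkerboard and a coarse one with 4-pixel squares give the same value (0.5) at block size 8 but differ at block size 2 (0.5 versus 0), so they would look alike at one scale and different at another.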

Background Separation

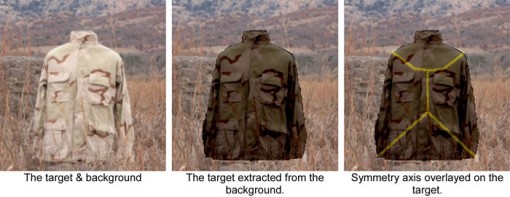

Once the target has been separated from the background, it is down to shape disruption to keep the observer from identifying what he is looking at as a target.

Target identification is based on the symmetry axis (visual skeleton) of the observed object. If the symmetry axis is suitably disrupted, the observer has to do a lot of processing to identify the shape. Processing takes time, and sometimes the observer gives up before finishing. The symmetry axis is disrupted by bands of dark vs. light and high vs. low texture running perpendicular to it.
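The article does not describe how the symmetry axis was computed. A standard way to approximate the skeleton of a binary silhouette, swapped in here as an assumption, is Zhang-Suen thinning, which repeatedly peels boundary pixels off the shape until a one-pixel-wide line remains. The input mask (for instance, a hand-drawn outline of the target) and the name `zhang_suen_skeleton` are hypothetical.

```python
def zhang_suen_skeleton(mask):
    """Thin a binary silhouette (rows of 0/1) to a one-pixel-wide skeleton (Zhang-Suen)."""
    h, w = len(mask), len(mask[0])
    # Pad with a one-pixel border of zeros so every pixel has 8 neighbours.
    img = ([[0] * (w + 2)]
           + [[0] + [1 if v else 0 for v in row] + [0] for row in mask]
           + [[0] * (w + 2)])

    def neighbours(y, x):
        # P2..P9, clockwise starting from the pixel directly above.
        return [img[y - 1][x], img[y - 1][x + 1], img[y][x + 1], img[y + 1][x + 1],
                img[y + 1][x], img[y + 1][x - 1], img[y][x - 1], img[y - 1][x - 1]]

    changed = True
    while changed:
        changed = False
        for step in (0, 1):
            to_delete = []
            for y in range(1, h + 1):
                for x in range(1, w + 1):
                    if img[y][x] != 1:
                        continue
                    p = neighbours(y, x)
                    b = sum(p)  # number of foreground neighbours
                    # Number of 0 -> 1 transitions going once around the neighbourhood.
                    a = sum(1 for i in range(8) if p[i] == 0 and p[(i + 1) % 8] == 1)
                    p2, p4, p6, p8 = p[0], p[2], p[4], p[6]
                    if step == 0:
                        ok = p2 * p4 * p6 == 0 and p4 * p6 * p8 == 0
                    else:
                        ok = p2 * p4 * p8 == 0 and p2 * p6 * p8 == 0
                    if 2 <= b <= 6 and a == 1 and ok:
                        to_delete.append((y, x))
            # Delete all marked pixels at once, as the algorithm requires.
            for y, x in to_delete:
                img[y][x] = 0
            if to_delete:
                changed = True
    return [row[1:-1] for row in img[1:-1]]
```

Applied to a solid bar three pixels high, it leaves a single horizontal line through the middle row, the bar's symmetry axis. One known limitation of this classic algorithm is that it erases a solid 2x2 block completely, so very small blobs are best filtered out first.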

Note how few of the disruptive elements in the image above cross the symmetry axis. The garment will therefore do very little to impede identification of the target.
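One hypothetical way to quantify how many disruption elements cross the axis is to sample intensity along the symmetry axis and count the light/dark changes. The sketch assumes the samples are already ordered along the axis (tracing a skeleton into an ordered path is left out) and uses the profile mean as the light/dark threshold; both choices, and the name `count_band_crossings`, are ours.

```python
def count_band_crossings(profile, threshold=None):
    """Count light/dark changes in values sampled in order along the symmetry axis.

    Samples above `threshold` count as light, the rest as dark. By default the
    threshold is the mean of the profile. More changes suggest more disruption.
    """
    if threshold is None:
        threshold = sum(profile) / len(profile)
    states = [v > threshold for v in profile]
    # Each change of state between neighbouring samples is one band edge crossed.
    return sum(1 for prev, cur in zip(states, states[1:]) if prev != cur)
```

A uniform profile returns 0, while a single dark-light-dark band along the axis returns 2.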

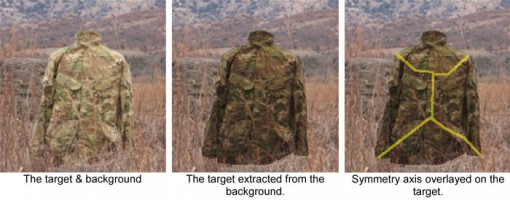

A bit better with MultiCam, but still no joy.

Notes

When we get back from our current Camo Comparison, we’ll be integrating these analysis methods into our images so we can truly evaluate each pattern.

We’d like to thank Riaan Rossouw for the fantastic analysis presented in this article. It’s been a pleasure to work with him to truly understand what makes a pattern effective.

We’re looking forward to giving you even better results with our next comparison. Stay tuned!
